Education and Technology

As technology has evolved, and as keeping pace with the newest and fastest technology has driven up spending (even though some technologies have fallen in price), policymakers have grown increasingly insistent that technology costs be justified by data showing gains in student achievement. At the same time, the broader education climate has shifted toward greater accountability for schools. Together, these changes have created the expectation that technology will make schools more effective by supporting data-driven decision making. This kind of technology use requires systemic changes in schools, including changes in instruction, assessment, expectations, teacher and student roles, and administrative direction.

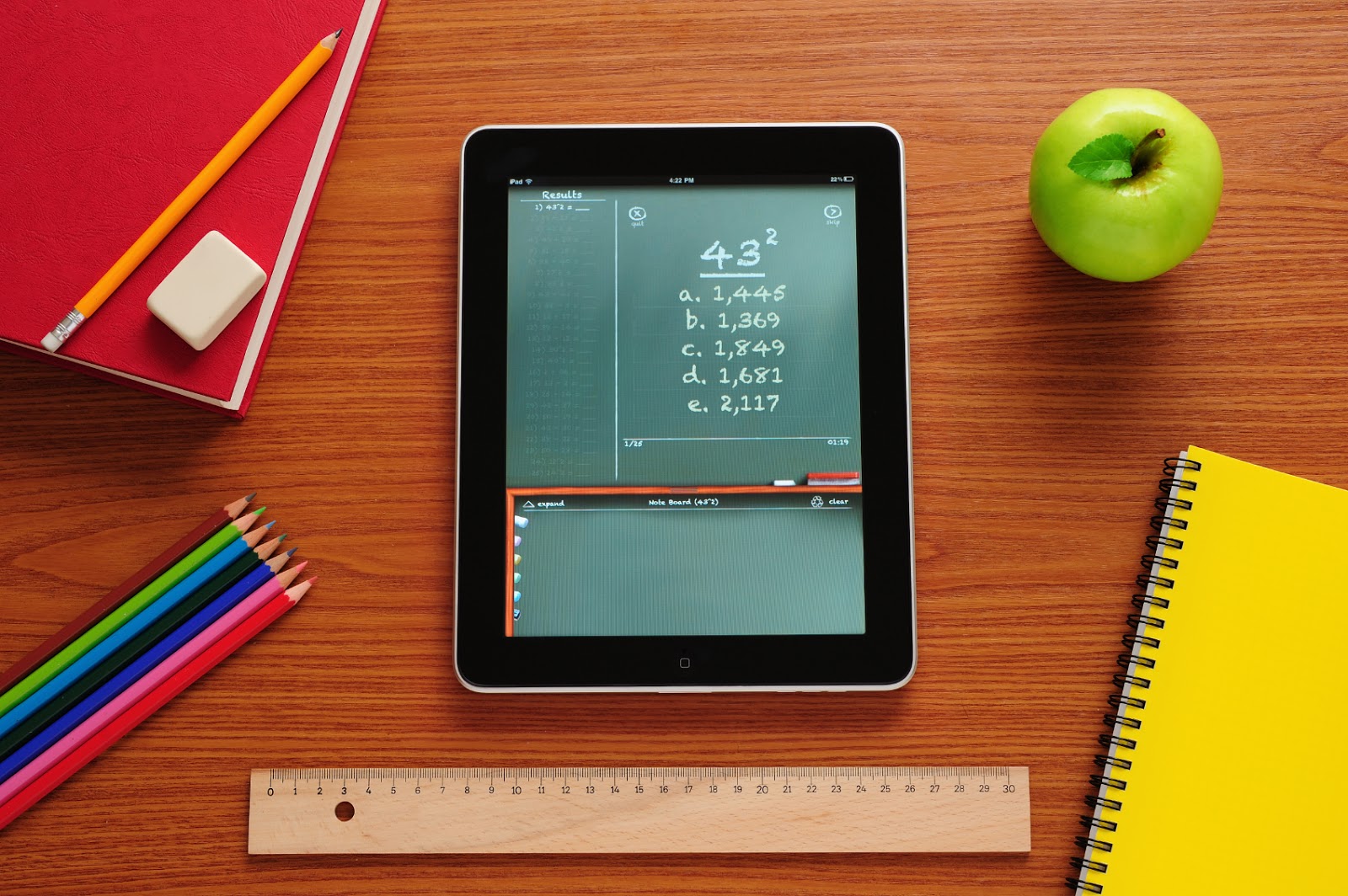

With recent technology, it is now possible to monitor the achievement of individual students and follow their progress on standardized tests. This form of accountability is popular with policymakers for its straightforwardness and its ostensible equity. The landmark No Child Left Behind (NCLB) Act specifically addresses technology as a way to enhance education, establishing the goal that all students will be technologically literate by the end of eighth grade. The U.S. Department of Education has an Office of Educational Technology and earlier this year awarded $15 million in grants to states for, among other things, studying technology's impact on student achievement. Technology is part of daily life in our society, and the education community is working to ensure that it is effectively incorporated into the education system. The most pressing issue for educators today is how to use information technology effectively to improve student learning. The next section provides a brief overview of technology use in education as viewed through three different frameworks.